Adversarial Robustness 360 - Demos

Try these interactive demos to explore some of the capabilities of the Adversarial Robustness Toolbox.

Defend your AI model against attacks. Our open-source software library helps both researchers and developers make AI systems more secure. In this demo, you can create and simulate attacks on machine learning models and try out different defense methods against them.

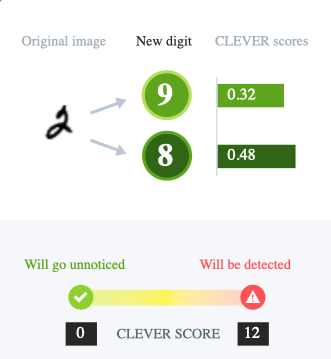

Can you take the bank to the cleaners? See how much extra cash you can clear by distorting the digits on a check to fool a bank’s image-processing system into misreading it. Then learn how IBM scientists assess how easily different systems can be fooled.
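The core idea behind this kind of attack can be illustrated with a minimal sketch of a gradient-sign perturbation, in the spirit of the fast gradient sign method (FGSM), applied to a toy linear classifier instead of a real check-reading system. Everything here is an assumption for illustration: the weights, the 4-pixel "image", the two digit labels and the `epsilon` budget are hypothetical, and the helper names are not part of the Adversarial Robustness Toolbox API.

```python
# Hypothetical toy model: a linear scorer over a flattened 4-pixel "image".
# A positive score is read as the digit "1", otherwise as "7" (illustrative only).
WEIGHTS = [0.8, -0.5, 0.3, -0.6]
BIAS = -0.1


def score(pixels):
    """Linear decision score w.x + b."""
    return sum(w * p for w, p in zip(WEIGHTS, pixels)) + BIAS


def predict(pixels):
    return "1" if score(pixels) > 0 else "7"


def sign(x):
    return (x > 0) - (x < 0)


def fgsm_perturb(pixels, epsilon, push_positive):
    """One gradient-sign step against the linear model.

    For score(x) = w.x + b the gradient with respect to x is simply w, so each
    pixel moves by at most epsilon along +sign(w_i) (to raise the score) or
    -sign(w_i) (to lower it), and is then clipped to the valid [0, 1] range.
    """
    direction = 1 if push_positive else -1
    return [
        min(1.0, max(0.0, p + direction * epsilon * sign(w)))
        for p, w in zip(pixels, WEIGHTS)
    ]


if __name__ == "__main__":
    clean = [0.6, 0.2, 0.5, 0.3]
    adversarial = fgsm_perturb(clean, epsilon=0.2, push_positive=False)
    print(predict(clean), predict(adversarial))  # prints: 1 7
```

No pixel changes by more than 0.2, yet the predicted digit flips. Real image classifiers are nonlinear, so attacks on them compute the gradient through the model rather than reading it off the weights.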

Hackers can poison training data to plant backdoors that cause an AI model to misclassify images. Learn how IBM researchers detect when data has been poisoned, then guess which backdoors have been hidden in these datasets.
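The poisoning step itself can be sketched in a few lines. This is a minimal illustration, assuming a BadNets-style attack in which a small bright square in the corner of an image acts as the backdoor trigger; the function names, trigger shape, poisoning fraction and target label are hypothetical and are not part of the Adversarial Robustness Toolbox.

```python
import random


def add_trigger(image, size=2, value=1.0):
    """Return a copy of a 2-D image with a bright square stamped in the bottom-right corner."""
    stamped = [row[:] for row in image]
    h, w = len(stamped), len(stamped[0])
    for r in range(h - size, h):
        for c in range(w - size, w):
            stamped[r][c] = value
    return stamped


def poison_dataset(images, labels, target_label, fraction, seed=0):
    """Stamp the trigger on a random fraction of samples and relabel them as target_label.

    Returns copies of the images and labels plus the set of poisoned indices
    (which a real attacker would keep secret). The inputs are not modified.
    """
    rng = random.Random(seed)
    n_poison = int(round(fraction * len(images)))
    poisoned_idx = set(rng.sample(range(len(images)), n_poison))
    new_images, new_labels = [], []
    for i, (img, lab) in enumerate(zip(images, labels)):
        if i in poisoned_idx:
            new_images.append(add_trigger(img))
            new_labels.append(target_label)
        else:
            new_images.append([row[:] for row in img])
            new_labels.append(lab)
    return new_images, new_labels, poisoned_idx


if __name__ == "__main__":
    images = [[[0.0] * 4 for _ in range(4)] for _ in range(10)]
    labels = [i % 10 for i in range(10)]
    p_images, p_labels, idx = poison_dataset(images, labels, target_label=7, fraction=0.3)
    print(sorted(idx), [p_labels[i] for i in sorted(idx)])
```

A model trained on such data can learn to associate the square with the target label while still behaving normally on clean images, which is what makes backdoors hard to spot from accuracy alone.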